Electhical is an industry forum whose focus is achieving a low total footprint for electronics. It is being held on Friday December 10th at Churchill College, Cambridge. The speakers are from government, industry and academia; they include executives and experts on technology policy, consumer electronics, manufacturing, security and privacy. It’s sponsored by ARM, IEEE, IEEE CAS and Churchill College; registration is free.

Category Archives: Seminars

Security engineering and machine learning

Last week I gave my first lecture in Edinburgh since becoming a professor there in February. It was also the first talk I’ve given in person to a live audience since February 2020.

My topic was the interaction between security engineering and machine learning. Many of the things that go wrong with machine-learning systems were already familiar in principle, as we’ve been using Bayesian techniques in spam filters and fraud engines for almost twenty years. Indeed, I warned about the risks of not being able to explain and justify the decisions of neural networks in the second edition of my book, back in 2008.

However the deep neural network (DNN) revolution since 2012 has drawn in hundreds of thousands of engineers, most of them without this background. Many fielded systems are extremely easy to break, often using tricks that have been around for years. What’s more, new attacks specific to DNNs – adversarial samples – have been found to exist for pretty well all models. They’re easy to find, and often transferable from one model to another.

I describe a number of new attacks and defences that we’ve discovered in the past three years, including the Taboo Trap, sponge attacks, data ordering attacks and markpainting. I argue that we will usually have to think of defences at the system level, rather than at the level of individual components; and that situational awareness is likely to play an important role.

Here now is the video of my talk.

SHB Seminar

The SHB seminar on November 5th was kicked off by Tom Holt, who’s discovered a robust underground market in identity documents that are counterfeit or fraudulently obtained. He’s been scraping both websites and darkweb sites for data and analysing how people go about finding, procuring and using such credentials. Most vendors were single-person operators although many operate within affiliate programs; many transactions involved cryptocurrency; many involve generating pdfs that people can print at home and that are good enough for young people to drink alcohol. Curiously, open web products seem to cost twice as much as dark web products.

Next was Jack Hughes, who has been studying the contract system introduced by hackforums in 2018 and made mandatory the following year. This enabled him to analyse crime forum behaviour before and during the covid-19 era. How do new users become active, and build up trust? How does it evolve? He collected 200,000 transactions and analysed them. The contract mandate stifled growth quickly, leading to a first peak; covid caused a second. The market was already centralised, and became more so with the pandemic. However contracts are getting done faster, and the main activity is currency exchange: it seems to be working as a cash-out market.

Anita Lavorgna has been studying the discourse of groups who oppose public mask mandates. Like the antivaxx movement, this can draw in fringe groups and become a public-health issue. She collected 23654 tweets from February to June 2020. There’s a diverse range of voices from different places on the political spectrum but with a transversal theme of freedom from government interference. Groups seek strength in numbers and seek to ally into movements, leading to the mask becoming a symbol of political identity construction. Anita found very little interaction between the different groups: only 144 messages in total.

Simon Parkin has been working on how we can push back on bad behaviours online while they are linked with good behaviours that we wish to promote. Precision is hard as many of the desirable behaviours are not explicitly recognised as such, and as many behaviours arise as a combination of personal incentives and context. The best way forward is around usability engineering – making the desired behaviours easier.

Bruce Schneier was the final initial speaker, and his topic was covid apps. The initial rush of apps that arrived in March through June have known issues around false positives and false negatives. We’ve also used all sorts of other tools, such as analysis of Google maps to measure lockdown compliance. The third thing is the idea of an immunity passport, saying you’ve had the disease, or a vaccine. That will have the same issues as the fake IDs that Tom talked about. Finally, there’s compliance tracking, where your phone monitors you. The usual countermeasures apply: consent, minimisation, infosec, etc., though the trade-offs might be different for a while. A further bunch of issues concern home working and the larger attack surface that many firms have as a result of unfamiliar tools, less resistance to being tols to do things etc.

The discussion started on fake ID; Tom hasn’t yet done test purchases, and might look at fraudulently obtained documents in the future, as opposed to completely counterfeit ones. Is hackforums helping drug gangs turn paper into coin? This is not clear; more is around cashing out cybercrime rather than street crime. There followed discussion by Anita of how to analyse corpora of tweets, and the implications for policy in real life. Things are made more difficult by the fact that discussions drift off into other platforms we don’t monitor. Another topic was the interaction of fashion: where some people wear masks or not as a political statement, many more buy masks that get across a more targeted statement. Fashion is really powerful, and tends to be overlooked by people in our field. Usability research perhaps focuses too much on the utilitarian economics, and is a bit of a blunt instrument. Another example related to covid is the growing push for monitoring software on employees’ home computers. Unfortunately Uber and Lyft bought a referendum result that enables them to not treat their staff in California as employees, so the regulation of working hours at home will probably fall to the EU. Can we perhaps make some input into what that should look like? Another issue with the pandemic is the effect on information security markets: why should people buy corporate firewalls when their staff are all over the place? And to what extent will some of these changes be permanent, if people work from home more? Another thread of discussion was how the privacy properties of covid apps make it hard for people to make risk-management decisions. The apps appear ineffective because they were designed to do privacy rather than to do public health, in various subtle ways; giving people low-grade warnings which do not require any action appear to be an attempt to raise public awareness, like mask mandates, rather than an effective attempt to get exposed individuals to isolate. Apps that check people into venues have their own issues and appear to be largely security theatre. Security theatre comes into its own where the perceived risk is much greater than the actual risk; covid is the opposite. What can be done in this case? Targeted warnings? Humour? What might happen when fatigue sets in? People will compromise compliance to make their lives bearable. That can be managed to some extent in institutions like universities, but in society it will be harder. We ended up with the suggestion that the next SHB seminar should be in February, which should be the low point; after that we can look forward to things getting better, and hopefully to a meeting in person in Cambridge on June 3-4 2021.

Fourth Annual Cybercrime Conference

The Cambridge Cybercrime Centre is organising another one day conference on cybercrime on Thursday, 11th July 2019.

We have a stellar group of invited speakers who are at the forefront of their fields:

- Jamie Saunders, UCL Department of Security and Crime Science

- Sergio Pastrana, Universidad Carlos III de Madrid

- Victoria Wang, University of Portsmouth

- Jack Hughes, Computer Laboratory, University of Cambridge

- Greg Francis, National Crime Agency

- Ugur Akyazi, Technische Universiteit Delft

- Qiu-Hong Wang, Singapore Management University

- Leonie Tanczer, University College London

- Diego Silva, University of Oxford

- Ben Collier, Cambridge Cybercrime Centre, University of Cambridge

- Richard Clayton, Cambridge Cybercrime Centre, University of Cambridge

They will present various aspects of cybercrime from the point of view of criminology, policy, security economics and policing.

This one day event, to be held in the Faculty of Law, University of Cambridge will follow immediately after (and will be in the same venue as) the “12th International Conference on Evidence Based Policing” organised by the Institute of Criminology which runs on the 9th and 10th July 2018.

Full details (and information about booking) is here.

FIPR 20th birthday

The FIPR 20th birthday seminar is taking place right now in the Cambridge Computer Lab, and the livestream is here.

Enjoy!

I may or may not find time to liveblog the sessions in followups…

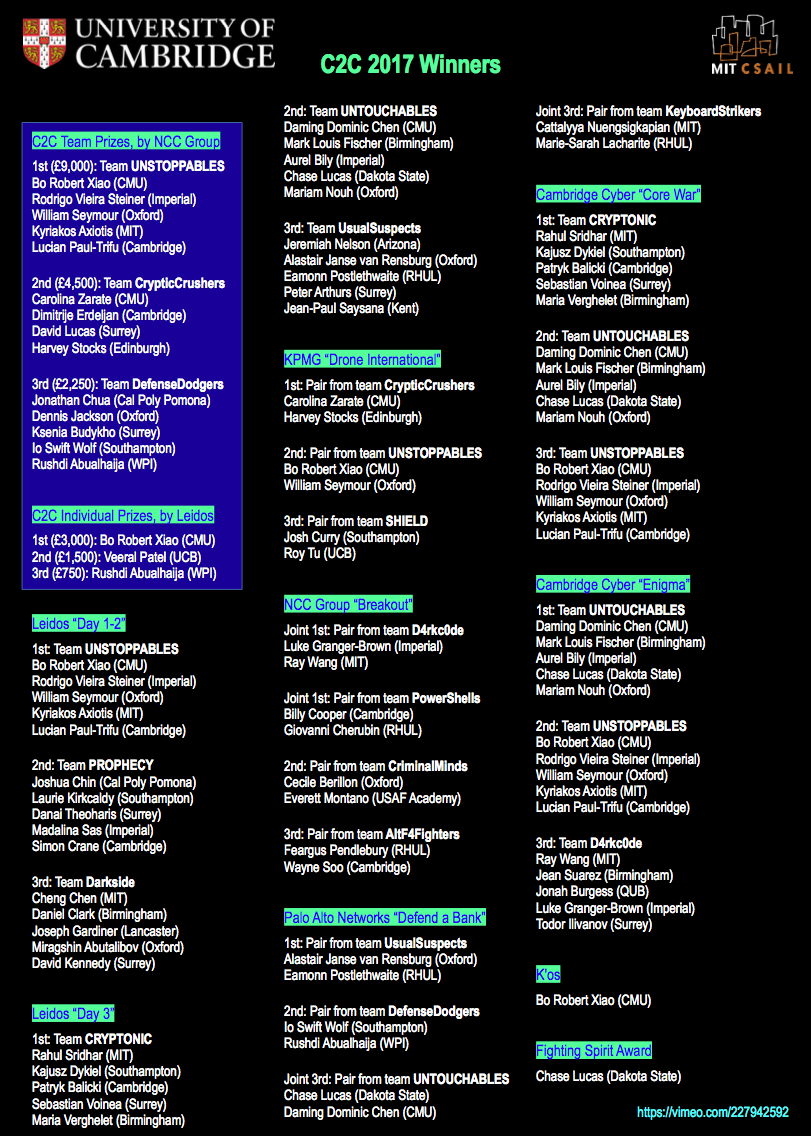

Cambridge2Cambridge 2017

Following on from various other similar events we organised over the past few years, last week we hosted our largest ethical hacking competition yet, Cambridge2Cambridge 2017, with over 100 students from some of the best universities in the US and UK working together over three days. Cambridge2Cambridge was founded jointly by MIT CSAIL (in Cambridge Massachusetts) and the University of Cambridge Computer Laboratory (in the original Cambridge) and was first run at MIT in 2016 as a competition involving only students from these two universities. This year it was hosted in Cambridge UK and we broadened the participation to many more universities in the two countries. We hope in the future to broaden participation to more countries as well.

Cambridge 2 Cambridge 2017 from Frank Stajano Explains on Vimeo.

We assigned the competitors to teams that were mixed in terms of both provenance and experience. Each team had competitors from US and UK, and no two people from the same university; and each team also mixed experienced and less experienced players, based on the qualifier scores. We did so to ensure that even those who only started learning about ethical hacking when they heard about this competition would have an equal chance of being in the team that wins the gold. We then also mixed provenance to ensure that, during these three days, students collaborated with people they didn’t already know.

Despite their different backgrounds, what the attendees had in common was that they were all pretty smart and had an interest in cyber security. It’s a safe bet that, ten or twenty years from now, a number of them will probably be Security Specialists, Licensed Ethical Hackers, Chief Security Officers, National Security Advisors or other high calibre security professionals. When their institution or country is under attack, they will be able to get in touch with the other smart people they met here in Cambridge in 2017, and they’ll be in a position to help each other. That’s why the defining feature of the event was collaboration, making new friends and having fun together. Unlike your standard one-day hacking contest, the ambitious three-day programme of C2C 2017 allowed for social activities including punting on the river Cam, pub crawling and a Harry Potter style gala dinner in Trinity College.

In between competition sessions we had a lively and inspirational “women in cyber” panel, another panel on “securing the future digital society”, one on “real world pentesting” and a careers advice session. On the second day we hosted several groups of bright teenagers who had been finalists in the national CyberFirst Girls Competition. We hope to inspire many more women to take up a career path that has so far been very male-dominated. More broadly, we wish to inspire many young kids, girls or boys, to engage in the thrilling challenge of unravelling how computers work (and how they fail to work) in a high-stakes mental chess game of adversarial attack and defense.

Our platinum sponsors Leidos and NCC Group endowed the competition with over £20,000 of cash prizes, awarded to the best 3 teams and the best 3 individuals. Besides the main attack-defense CTF, fought on the Leidos CyberNEXS cyber range, our other sponsors offered additional competitions, the results of which were combined to generate the overall teams and individual scores. Here is the leaderboard, showing how our contestants performed. Special congratulations to Bo Robert Xiao of Carnegie Mellon University who, besides winning first place in both team and individuals, also went on to win at DEF CON in team PPP a couple of days later.

We are grateful to our supporters, our sponsors, our panelists, our guests, our staff and, above all, our 110 competitors for making this event a success. It was particularly pleasing to see several students who had already taken part in some of our previous competitions (special mention for Luke Granger-Brown from Imperial who earned medals at every visit). Chase Lucas from Dakota State University, having passed the qualifier but not having picked in the initial random selection, was on the reserve list in case we got funding to fly additional students; he then promptly offered to pay for his own airfare in order to be able to attend! Inter-ACE 2017 winner Io Swift Wolf from Southampton deserted her own graduation ceremony in order to participate in C2C (!), and then donated precious time during the competition to the CyberFirst girls who listened to her rapturously. Accumulating all that good karma could not go unrewarded, and indeed you can once again find her name in the leaderboard above. And I’ve only singled out a few, out of many amazing, dynamic and enthusiastic young people. Watch out for them: they are the ones who will defend the future digital society, including you and your family, from the cyber attacks we keep reading about in the media. We need many more like them, and we need to put them in touch with each other. The bad guys are organised, so we have to be organised too.

The event was covered by Sky News, ITV, BBC World Service and a variety of other media, which the official website and twitter page will undoubtedly collect in due course.

Cambridge Cybercrime Conference

I’m sitting in the Inaugural Cybercrime Conference of the Cambridge Cloud Cybercrime Centre, and will attempt to liveblog the talks in followups to this post.

More on the IP bill

I’m in a symposium at Churchill College on the Investigatory Powers Bill. It’s organised by John Naughton and I’ll be speaking later on equipment interference, a topic on which I wrote an expert report for the recent IP Tribunal case brought by Privacy International. Meanwhile I’ll try to liveblog the event in followups to this post.

Scrambling for Safety X

Today I’m at the tenth Scrambling for Safety which is being held at Kings College London. Sorry, all the tickets are sold out, but there is a video feed available from the Open Rights Group website.

Passwords 2015 at Cambridge

A very exciting Passwords 2015 is being hosted at the Computer Laboratory from 7 to 9 December. A unique conference that brings together the world’s top password hackers and academics. It is being liveblogged by the participants on Twitter with the hashtag #passwords15. A live feed is available on the Passwords 2015 page.