I’m at Financial Crypto 2018 and will try to liveblog some of the sessions in followups to this post.

Category Archives: Privacy technology

What Goes Around Comes Around

What Goes Around Comes Around is a chapter I wrote for a book by EPIC. What are America’s long-term national policy interests (and ours for that matter) in surveillance and privacy? The election of a president with a very short-term view makes this ever more important.

While Britain was top dog in the 19th century, we gave the world both technology (steamships, railways, telegraphs) and values (the abolition of slavery and child labour, not to mention universal education). America has given us the motor car, the Internet, and a rules-based international trading system – and may have perhaps one generation left in which to make a difference.

Lessig taught us that code is law. Similarly, architecture is policy. The architecture of the Internet, and the moral norms embedded in it, will be a huge part of America’s legacy, and the network effects that dominate the information industries could give that architecture great longevity.

So if America re-engineers the Internet so that US firms can microtarget foreign customers cheaply, so that US telcos can extract rents from foreign firms via service quality, and so that the NSA can more easily spy on people in places like Pakistan and Yemen, then in 50 years’ time the Chinese will use it to manipulate, tax and snoop on Americans. In 100 years’ time it might be India in pole position, and in 200 years the United States of Africa.

My book chapter explores this topic. What do the architecture of the Internet, and the network effects of the information industries, mean for politics in the longer term, and for human rights? Although the chapter appeared in 2015, I forgot to put it online at the time. So here it is now.

Is this research ethical?

The Economist features face recognition on its front page, reporting that deep neural networks can now tell whether you’re straight or gay better than humans can just by looking at your face. The research they cite is a preprint, available here.

Its authors Kosinski and Wang downloaded thousands of photos from a dating site, ran them through a standard feature-extraction program, then classified gay vs straight using a standard statistical classifier, which they found could tell the men seeking men from the men seeking women. My students pretty well instantly called this out as selection bias; if gay men consider boyish faces to be cuter, then they will upload their most boyish photo. The paper authors suggest their finding may support a theory that sexuality is influenced by fetal testosterone levels, but when you don’t control for such biases your results may say more about social norms than about phenotypes.
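To see how selection bias alone could produce such a result, here is a toy simulation under loudly stated assumptions. Each user's photos get a hypothetical one-dimensional "boyishness" score drawn from the same normal distribution for both groups. The only difference is that one group uploads a random photo while the other uploads its highest-scoring one. The score, the five photos per user and the midpoint threshold rule are illustrative inventions, not anything taken from the Kosinski and Wang paper.

```python
import random
import statistics


def uploaded_scores(n_users: int, photos_each: int, pick_max: bool,
                    rng: random.Random) -> list[float]:
    """Hypothetical 'boyishness' score of the photo each user chooses to upload.

    Every user's photos are drawn from the SAME distribution, N(0, 1);
    only the choice of which photo to upload differs between groups.
    """
    scores = []
    for _ in range(n_users):
        photos = [rng.gauss(0.0, 1.0) for _ in range(photos_each)]
        scores.append(max(photos) if pick_max else rng.choice(photos))
    return scores


def threshold_accuracy(group_a: list[float], group_b: list[float]) -> float:
    """Accuracy of the rule 'score above the midpoint of the two means => group B'."""
    cut = (statistics.mean(group_a) + statistics.mean(group_b)) / 2
    correct = sum(s <= cut for s in group_a) + sum(s > cut for s in group_b)
    return correct / (len(group_a) + len(group_b))


if __name__ == "__main__":
    rng = random.Random(2017)
    a = uploaded_scores(10_000, 5, pick_max=False, rng=rng)  # uploads a random photo
    b = uploaded_scores(10_000, 5, pick_max=True, rng=rng)   # uploads its "best" photo
    print(f"mean uploaded score, random pick: {statistics.mean(a):.2f}")
    print(f"mean uploaded score, best pick:   {statistics.mean(b):.2f}")
    print(f"threshold classifier accuracy:    {threshold_accuracy(a, b):.2f}")
```

A simple threshold separates the two groups well above chance, even though their faces were generated identically; what it has learned is upload behaviour, not phenotype.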

Quite apart from the scientific value of the research, which is perhaps best assessed by specialists, I’m concerned with the ethics and privacy aspects. I am surprised that the paper doesn’t report having been through ethical review; the authors consider that photos on a dating website are public information and appear to assume that privacy issues simply do not arise.

Yet UK courts decided, in Campbell v Mirror, that privacy could be violated even by photos taken on the public street, and European courts have come to similar conclusions in I v Finland and elsewhere. For example, a Catholic woman is entitled to object to the use of her medical record in research on abortifacients and contraceptives even if the proposed use is fully anonymised and presents no privacy risk whatsoever. The dating site users would be similarly entitled to object to their photos being used in research to which they might have an ethical objection, even if they could not be identified from their photos. There are surely going to be people who object to research in any nature vs nurture debate, especially on a charged topic such as sexuality. And the whole point of the Economist’s coverage is that face-recognition technology is now good enough to work at population scale.

What do LBT readers think?

Security Protocols 2017

I’m at the twenty-fifth Security Protocols Workshop, of which the theme is protocols with multiple objectives. I’ll try to liveblog the talks in followups to this post.

Government U-turn on Health Privacy

Now that everyone’s distracted with the Supreme Court case on Brexit, you can expect the government to sneak out something it’s ashamed of. Health Secretary Jeremy Hunt has decided to ignore the wishes of over a million people who opted out of having their hospital records given to third parties such as drug companies, and the ICO has decided to pretend that the anonymisation mechanisms he says he’ll use instead are sufficient. One gently smoking gun is the fifth bullet in a new webpage here, where the Department of Health claims that when it says the data are anonymous, your wishes will be ignored. The news was broken in an article in the Health Services Journal (it’s behind a paywall, as a splendid example of transparency) with the Wellcome Trust praising the ICO’s decision not to take action against the Department. We are assured that “the data is seen as crucial for vital research projects”. The exchange of letters with privacy campaigners that led up to this decision can be found here, here, here, here, here, here, and here.

An early portent of this U-turn was reported here in 2014 when officials reckoned that the only way they could still do administrative tasks such as calculating doctors’ bonuses was to just pretend that the data are anonymous even though they know they aren’t really. Then, after the care.data scandal showed that a billion records had been sold to over a thousand purchasers, we reported here how HES data had also been sold and how the minister seemed to have misled parliament about this.

I will be talking about ethics of all this on Thursday. Even if ministers claim that stolen medical records are OK to use, researchers must not act as if this is true; if patients end up trusting doctors as little as we trust politicians, then medical research will be in serious trouble. There is a video of a previous version of this talk here.

Meanwhile, if you’re annoyed that Jeremy Hunt proposes to ignore not just your privacy rights but your express wishes, you can send him a notice under Section 10 of the Data Protection Act forbidding him from disclosing your data. The Department has complied with such notices in the past, albeit with bad grace as they have no automated way to do it. If thousands of people serve such notices, they may finally have to stand up to the drug company lobbyists and write the missing software. For more, see here.

Hacking the iPhone PIN retry counter

At our security group meeting on the 19th August, Sergei Skorobogatov demonstrated a NAND backup attack on an iPhone 5c. I typed in six wrong PINs and it locked; he removed the flash chip (which he’d desoldered and led out to a socket); he erased and restored the changed pages; he put it back in the phone; and I was able to enter a further six wrong PINs.
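The logic of this NAND backup (mirroring) attack can be illustrated with a deliberately simplified Python model. It assumes, purely for illustration, that the failed-attempt counter lives in a single flash page and that the phone trusts whatever that page contains; the page number, the one-byte encoding and the lockout rule are hypothetical and are not a description of Apple’s actual design. Imaging the chip once and writing the image back whenever the counter hits the limit turns six tries into as many as the attacker has patience for.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional

MAX_TRIES = 6      # lockout threshold, matching the six tries in the demo
COUNTER_PAGE = 7   # hypothetical flash page that stores the failure counter


@dataclass
class Flash:
    """Toy NAND chip: page number -> page contents."""
    pages: dict[int, bytes] = field(default_factory=dict)

    def dump(self) -> dict[int, bytes]:
        """Copy every page, as an attacker would image the chip."""
        return dict(self.pages)

    def restore(self, image: dict[int, bytes]) -> None:
        """Write a saved image back, undoing any pages changed since."""
        self.pages = dict(image)


@dataclass
class Phone:
    flash: Flash
    pin: str

    def failures(self) -> int:
        # Counter is a single byte in the hypothetical counter page (0 if unset).
        return self.flash.pages.get(COUNTER_PAGE, b"\x00")[0]

    def try_pin(self, guess: str) -> bool:
        if self.failures() >= MAX_TRIES:
            raise PermissionError("phone locked")
        if guess == self.pin:
            return True
        # The phone persists the new failure count to flash.
        self.flash.pages[COUNTER_PAGE] = bytes([self.failures() + 1])
        return False


def brute_force_with_mirroring(phone: Phone, candidates: list[str]) -> Optional[str]:
    """Guess PINs, restoring the flash image whenever the counter hits the limit."""
    image = phone.flash.dump()  # taken while the counter is still low
    for guess in candidates:
        if phone.failures() >= MAX_TRIES:
            phone.flash.restore(image)  # counter page reverts to its saved value
        if phone.try_pin(guess):
            return guess
    return None


if __name__ == "__main__":
    phone = Phone(Flash(), pin="0042")
    found = brute_force_with_mirroring(phone, [f"{i:04d}" for i in range(10_000)])
    print("recovered PIN:", found)
```

In the model the restore is free; in the demonstration each restore required work on the desoldered chip, which is what limits the attack in practice.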

Sergei has today released a paper describing the attack.

During the recent fight between the FBI and Apple, FBI Director Jim Comey said this kind of attack wouldn’t work.

USENIX Security Best Paper 2016 – The Million Key Question … Origins of RSA Public Keys

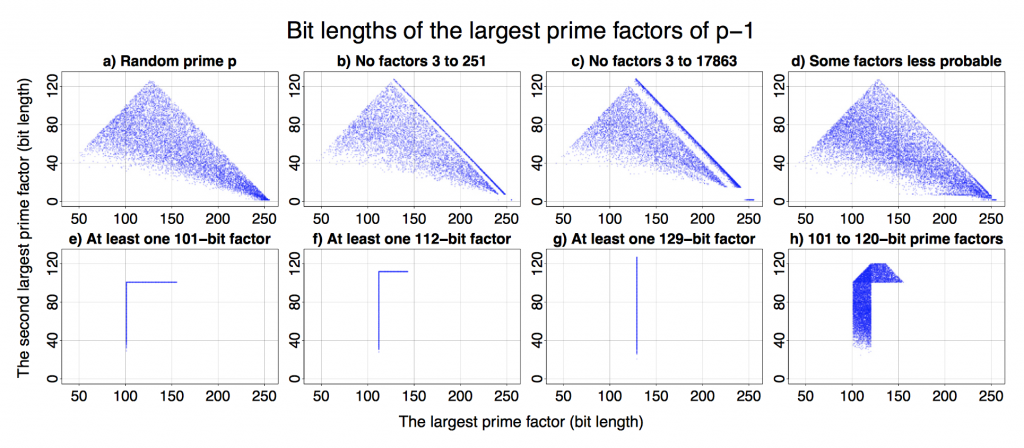

Petr Svenda et al from Masaryk University in Brno won the Best Paper Award at this year’s USENIX Security Symposium with their paper classifying public RSA keys according to their source.

I really like the simplicity of the original assumption. The starting point of the research was that different crypto/RSA libraries use slightly different elimination methods and “cut-off” thresholds to find suitable prime numbers. The authors thought these differences should be sufficient to detect a particular cryptographic implementation, and that all that was needed were the public keys. Petr et al. confirmed this assumption. Having worked with Petr and followed his activities closely, I think the best paper award is well-deserved recognition.

The authors created a method for efficiently identifying the source (software library or hardware device) of RSA public keys. It resulted in a classification of keys into more than a dozen categories. This classification can be used as a fingerprint that decreases the anonymity of users of Tor and other privacy-enhancing mailers or operators.

All this is the result of an analysis of over 60 million freshly generated keys from 22 open- and closed-source libraries and from 16 different smart cards. While the findings are fairly theoretical, they are demonstrated with a series of easy-to-understand graphs (see above).
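The underlying idea can be shown with a toy Python sketch. Two hypothetical libraries differ only in how they pick primes: “library A” forces the top two bits of each prime to 1, while “library B” forces only the top bit but insists that p ≡ 3 (mod 4). Those rules, the two features (the top four bits of the modulus and n mod 4) and the smoothed frequency classifier are assumptions chosen for illustration; they are not the feature set or classifier from the Svenda et al. paper, and 64-bit primes are far too small for real RSA.

```python
from __future__ import annotations

import random
from collections import Counter

SMALL_PRIMES = (3, 5, 7, 11, 13, 17, 19, 23, 29, 31, 37)


def is_probable_prime(n: int, rng: random.Random, rounds: int = 16) -> bool:
    """Miller-Rabin probabilistic primality test."""
    if n < 2:
        return False
    if n % 2 == 0:
        return n == 2
    for p in SMALL_PRIMES:
        if n % p == 0:
            return n == p
    d, s = n - 1, 0
    while d % 2 == 0:
        d //= 2
        s += 1
    for _ in range(rounds):
        x = pow(rng.randrange(2, n - 1), d, n)
        if x in (1, n - 1):
            continue
        for _ in range(s - 1):
            x = pow(x, 2, n)
            if x == n - 1:
                break
        else:
            return False  # witness found: n is composite
    return True


def prime_lib_a(bits: int, rng: random.Random) -> int:
    """Hypothetical library A: random odd candidate with the top two bits set."""
    while True:
        c = rng.getrandbits(bits) | (0b11 << (bits - 2)) | 1
        if is_probable_prime(c, rng):
            return c


def prime_lib_b(bits: int, rng: random.Random) -> int:
    """Hypothetical library B: only the top bit set, and p = 3 (mod 4)."""
    while True:
        c = rng.getrandbits(bits) | (1 << (bits - 1)) | 0b11
        if is_probable_prime(c, rng):
            return c


def modulus(gen, bits: int, rng: random.Random) -> int:
    """Public modulus n = p * q from one library's prime generator."""
    return gen(bits, rng) * gen(bits, rng)


def features(n: int, bits: int) -> tuple[int, int]:
    """Top 4 bits of n in a (2 * bits)-bit frame, and n mod 4."""
    return n >> (2 * bits - 4), n % 4


def profile(moduli: list[int], bits: int) -> Counter:
    """Histogram of feature tuples for keys known to come from one source."""
    return Counter(features(n, bits) for n in moduli)


def classify(n: int, bits: int, profiles: dict[str, Counter], alpha: float = 1.0) -> str:
    """Pick the source whose smoothed histogram makes n's features most likely."""
    f = features(n, bits)
    vocab = 16 * 4  # number of possible feature tuples

    def likelihood(c: Counter) -> float:
        return (c[f] + alpha) / (sum(c.values()) + alpha * vocab)

    return max(profiles, key=lambda name: likelihood(profiles[name]))


if __name__ == "__main__":
    rng = random.Random(2016)
    bits = 64
    train = {"lib A": [modulus(prime_lib_a, bits, rng) for _ in range(200)],
             "lib B": [modulus(prime_lib_b, bits, rng) for _ in range(200)]}
    profiles = {name: profile(ns, bits) for name, ns in train.items()}
    fresh_b = [modulus(prime_lib_b, bits, rng) for _ in range(50)]
    print(Counter(classify(n, bits, profiles) for n in fresh_b))
```

Here library B’s moduli are always ≡ 1 (mod 4) and library A’s always have a top nibble of at least 9, so some keys can be attributed with certainty and the rest only probabilistically; only public keys are needed, which is what makes audits over scanned key datasets plausible.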

I can’t see an easy way to exploit the results for immediate cyber attacks. However, we started looking into practical applications. There are interesting opportunities for enterprise compliance audits, as the classification only requires access to datasets of public keys – often created as a by-product of internal network vulnerability scanning.

An extended version of the paper is available from http://crcs.cz/rsa.

Yet another Android side channel: input stealing for fun and profit

At PETS 2016 we presented a new side-channel attack in our paper Don’t Interrupt Me While I Type: Inferring Text Entered Through Gesture Typing on Android Keyboards. This was part of Laurent Simon’s thesis, and earned him runner-up for the best student paper award.

We found that software on your smartphone can infer words you type in other apps by monitoring the aggregate number of context switches and the number of hardware interrupts. These are readable by permissionless apps within the virtual procfs filesystem (mounted under /proc). Three previous research groups had found that other files under procfs support side channels. But the files they used contained information about individual apps; for example, the file /proc/uid_stat/victimapp/tcp_snd contains the number of bytes sent by “victimapp”. These files are no longer readable in the latest Android version.

We found that the “global” files – those that contain aggregate information about the system – also leak. So a curious app can monitor these global files as a user types on the phone and try to work out the words. We looked at smartphone keyboards that support “gesture typing”: a novel input mechanism democratized by SwiftKey, whereby a user drags their finger from letter to letter to enter words.
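The measurement side of such an attack is simple. One place Linux exposes these aggregate counters is /proc/stat, whose `intr` line begins with the total interrupt count and whose `ctxt` line gives the number of context switches since boot. The sketch below, written for a generic Linux system, samples both and reports how much they change per interval. The sampling interval and count are arbitrary assumptions; the inference step that maps these time series to gestured words, which is the substance of the paper, is not attempted here, and whether an app can read /proc/stat at all depends on the platform and OS version.

```python
from __future__ import annotations

import time


def parse_proc_stat(text: str) -> tuple[int, int]:
    """Return (total hardware interrupts, total context switches) from /proc/stat text."""
    intr = ctxt = None
    for line in text.splitlines():
        fields = line.split()
        if not fields:
            continue
        if fields[0] == "intr":
            intr = int(fields[1])  # first number is the system-wide total
        elif fields[0] == "ctxt":
            ctxt = int(fields[1])
    if intr is None or ctxt is None:
        raise ValueError("no intr/ctxt lines found")
    return intr, ctxt


def deltas(snapshots: list[tuple[int, int]]) -> list[tuple[int, int]]:
    """Per-interval increments between consecutive (intr, ctxt) snapshots."""
    return [(b[0] - a[0], b[1] - a[1]) for a, b in zip(snapshots, snapshots[1:])]


def sample(path: str = "/proc/stat", interval_s: float = 0.01,
           count: int = 200) -> list[tuple[int, int]]:
    """Poll the global counters and return their per-interval changes."""
    snaps = []
    for _ in range(count):
        with open(path) as f:
            snaps.append(parse_proc_stat(f.read()))
        time.sleep(interval_s)
    return deltas(snaps)


if __name__ == "__main__":
    # Samples for roughly two seconds; bursts of input show up as spikes.
    for d_intr, d_ctxt in sample()[:20]:
        print(f"interrupts +{d_intr:6d}   context switches +{d_ctxt:6d}")
```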

This work shows once again how difficult it is to prevent side channels: they come up in all sorts of interesting and unexpected ways. Fortunately, we think there is an easy fix: Google should simply disable access to all procfs files, rather than just the files that leak information about individual apps. Meanwhile, if you’re developing apps for privacy or anonymity, you should be aware that these risks exist.

PETS 2016

I am at the Privacy Enhancing Technologies Symposium (PETS 2016) in Darmstadt until Friday, and will try to liveblog some of the sessions in followups to this post. (I can’t do them all as there are some parallel sessions.)

Royal Society report on cybersecurity research

The Royal Society has just published a report on cybersecurity research. I was a member of the steering group that tried to keep the policy team headed in the right direction. Its recommendation that governments preserve the robustness of encryption is welcome enough, given the new Russian law on access to crypto keys; and given the conservative nature of the Society, it was nice to get. But I’m afraid the glass is only half full.

I was disappointed that the final report went along with the GCHQ line that security breaches should not be reported to affected data subjects, as in the USA, but to the agencies, as mandated in the EU’s NIS directive. Its call for an independent review of the UK’s cybersecurity needs may also achieve little. I was on John Beddington’s Blackett Review five years ago, and the outcome wasn’t published; it was mostly used to justify a budget increase for GCHQ. Its call for UK government work on standards is irrelevant post-Brexit; indeed standards made in Europe will probably be better without UK interference. Most of all, I cannot accept the report’s line that the government should help direct cybersecurity research. Most scientists agree that too much money already goes into directed programmes and not enough into responsive-mode and curiosity-driven research. In the case of security research there is a further factor: the stark conflict of interest between bona fide researchers, whose aim is that some of the people should enjoy some security and privacy some of the time, and agencies engaged in programmes such as Operation Bullrun whose goal is that this should not happen. GCHQ may want a “more responsive cybersecurity agenda”; but that’s the last thing people like me want them to have.

The report has in any case been overtaken by events. First, Brexit is already doing serious harm to research funding. Second, Brexit is also doing serious harm to the IT industry; we hear daily of listings postponed, investments reconsidered and firms planning to move development teams and data overseas. Third, the Investigatory Powers bill currently before the House of Lords highlights the fact that the surveillance debate in the West these days is more about access to data at rest and about whether the government can order firms to hack their customers.

While all three arms of the US government have drawn back on surveillance powers following the Snowden revelations, Theresa May has taken the hardest possible line. Her Investigatory Powers Bill will give her successors as Home Secretary sweeping powers to order firms in the UK to hand over data and help GCHQ hack their customers. Brexit will shield these powers from challenge in the European Court of Justice, making it much harder for a UK company to claim “adequacy” for its data protection arrangements in respect of EU data subjects. This will make it still less attractive for an IT company to keep in the UK either data that could be seized or engineering staff who could be coerced. I am seriously concerned that, together with Brexit, this will be the double whammy that persuades overseas firms not to invest in the UK, and that even causes some UK firms to leave. In the face of this massive self-harm, the measures suggested by the report are unlikely to help much.