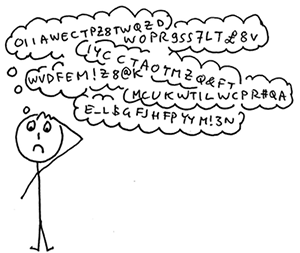

When you are a medical doctor, friends and family invariably ask you about their aches and pains. When you are a computer specialist, they ask you to fix their computer. About ten years ago, most of the questions I was getting from friends and family as a security techie had to do with frustration over passwords. I observed that what techies had done to the rest of humanity was not just wrong but fundamentally unethical: asking people to do something impossible and then, if they got hacked, blaming them for not doing it.

So in 2011, years before the Fido Alliance was formed (2013) and Apple announced its smartwatch (2014), I published my detailed design for a clean-slate password replacement I called Pico, an alternative system intended to be easier to use and more secure than passwords. The European Research Council was generous enough to fund my vision with a grant that allowed me to recruit and lead a team of brilliant researchers over a period of five years. We built a number of prototypes, wrote a bunch of papers, offered projects to a number of students and even launched a start-up and thereby learnt a few first-hand lessons about business, venture capital, markets, sales and the difficult process of transitioning from academic research to a profitable commercial product. During all those years we changed our minds a few times about what ought to be done and we came to understand a lot better both the problem space and the mindset of the users.