Since the blog post on our paper Verified by Visa and MasterCard SecureCode: or, How Not to Design Authentication, there has been quite a bit of feedback, including media coverage. Generally, commenters have agreed with our conclusions, and there have been some informative contributions giving industry perspectives, including at Finextra.

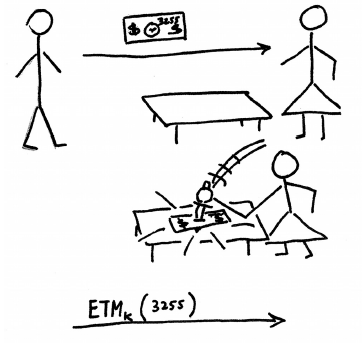

One question which has appeared a few times is why we called 3-D Secure (3DS) a single sign-on (SSO) system. 3DS certainly wasn’t designed as an SSO system, but I think it meets the key requirement: it allows one party to authenticate another without credentials (passwords, keys, etc.) being set up in advance. As with other SSO systems such as OpenID and Windows CardSpace, there is a trusted intermediary, with which both communicating parties have a relationship, that facilitates the authentication process.

For this reason, I think it is fair to classify 3DS as a special-purpose SSO system. Your card number acts as a pseudonym, and the protocol gives the merchant some assurance that the customer is the legitimate controller of that pseudonym. This is a very similar situation to OpenID, which provides the relying party assurance that the visitor is the legitimate controller of a particular URL. On top of this basic functionality, 3DS also gives the merchant assurance that the customer is able to pay for the goods, and provides a mechanism to transfer funds.

People are permitted to have multiple cards, but this does not prevent 3DS from being an SSO system. In fact, it is generally desirable, for privacy purposes, to allow users to have multiple pseudonyms. Existing SSO systems support this in various ways: OpenID lets you have multiple domain names, and Windows CardSpace uses clever cryptography.

Another question which came up was whether 3DS is actually transaction authentication, since the issuer does get a description of the transaction. I would argue not, because the transaction description does not go to the customer, so the protocol is vulnerable to a man-in-the-middle attack if the customer’s PC is compromised.
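To make the distinction concrete, here is a minimal Python sketch. It is not taken from the paper or from the 3DS specification. It assumes a hypothetical trusted device that shares a key with the issuer, and all names in it (`SHARED_KEY`, `device_code`, `issuer_accepts`, `password_only_accepts`) are illustrative. With a password alone, malware that rewrites the transaction goes unnoticed. If instead the customer approves a description shown on the trusted device, the resulting code covers that description, so a rewritten transaction no longer verifies.

```python
import hashlib
import hmac

# Hypothetical key shared between the issuer and a trusted customer device.
SHARED_KEY = b"illustrative-key-only"


def device_code(description: str) -> str:
    """Code a trusted device would compute after showing `description` to the customer."""
    return hmac.new(SHARED_KEY, description.encode(), hashlib.sha256).hexdigest()


def issuer_accepts(description_at_issuer: str, code: str) -> bool:
    """Issuer recomputes the code over the description it actually received."""
    expected = device_code(description_at_issuer)
    return hmac.compare_digest(expected, code)


def password_only_accepts(description_at_issuer: str, password: str) -> bool:
    """Password check that ignores the transaction description entirely."""
    return hmac.compare_digest(password, "correct-password")


intended = "pay 20.00 GBP to bookshop"
tampered = "pay 2000.00 GBP to attacker"  # rewritten by malware on the customer's PC

# Password only: the tampered transaction is still approved.
print(password_only_accepts(tampered, "correct-password"))  # True

# Transaction authentication: the code covers what the customer saw,
# so the issuer rejects a description that differs from it.
print(issuer_accepts(tampered, device_code(intended)))  # False
print(issuer_accepts(intended, device_code(intended)))  # True
```

The point of the sketch is only that the approval must be bound to a description the customer has actually seen through a channel the attacker does not control. How a real scheme would display the description and manage keys is outside its scope.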

A separate point is whether it is useful to categorize 3DS as SSO. I would argue yes, because we can then compare 3DS to other SSO systems. For example, OpenID uses the domain name system to produce a hierarchical namespace. In contrast, 3DS has a flat numerical namespace and additional intermediaries in the authentication process. Such architectural comparisons between deployed systems are very useful to future designers. In fact, the most significant result the paper presents is one from security economics: 3DS is inferior in almost every way to the competition, yet succeeded because incentives were aligned. Specifically, the reward for implementing 3DS is the ability to push fraud costs onto someone else: the merchant pushes them onto the issuer, and the issuer onto the customer.